For the remainder of my posts here, I’m going to move on to new work, and try out some ideas. This post will continue on the theme of consciousness, but turn to a very different question. The work I will discuss in this post is conducted in collaboration with Will Davies, so hopefully it won’t go too far askew of reality.

There was a lot of excitement generated a few years back when a team of neuroscientists headed by Adrian Owen claimed to detect consciousness in a patient diagnosed as being in a persistent vegetative state (PVS). Their work was extended in a later paper. In earlier work, I expressed doubts that the neuroscientists had detected phenomenal consciousness. But that didn’t lead me to a view concerning the significance of the findings that diverged from the consensus, because I doubt that phenomenal consciousness underwrites the kind of moral significance that underwrites serious moral value. Rather, I thought it was the capacity to have, and to self-attribute, psychological states that allow the person to have cares and projects that underwrites serious moral value, and the kind of consciousness that is needed for this capacity is consciousness as access to informational states.

Building on recent work by (my colleague) Colin Klein, we now think that the central plank of the inference from the data to the possession of (any kind of) consciousness is much less solid than it appeared. Though Klein himself accepts that the patients are conscious, we think that there are grounds for genuine doubt about that claim. Even if Klein is right, moreover, and the patients enjoy some kind of consciousness, we doubt that the kind of consciousness for which the data is evidence justifies attribution of the kind of moral status that is usually attributable to conscious beings.

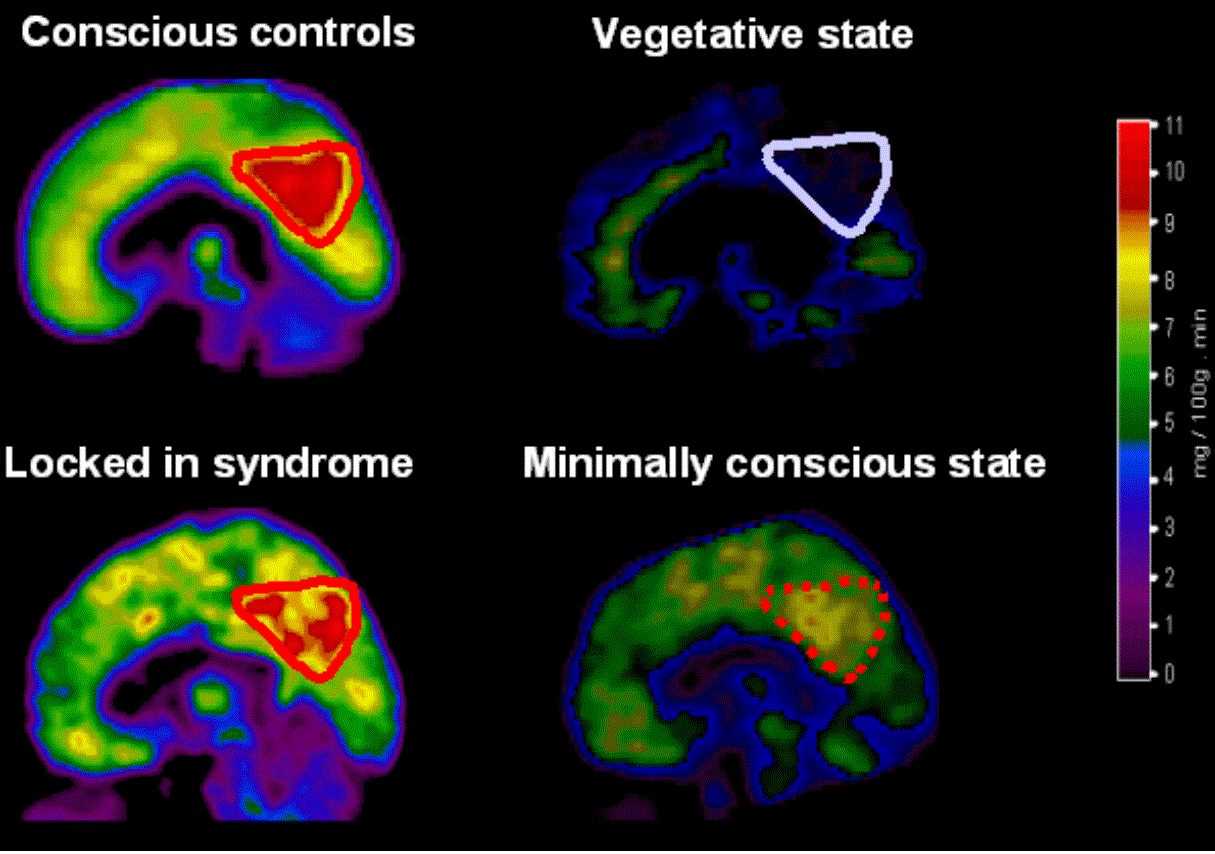

Briefly, there are two pieces of evidence for the presence of consciousness in PVS patients. Owen et al. (2006) showed that the neural activation in a PVS patient who was asked to imagine playing tennis and to imagine navigating her own house was indistinguishable from the neural activation exhibited by healthy controls asked to perform the same tasks. Building on these results, Monti et al. (2010) used the same paradigm to develop what was, in effect, an fMRI-based communication system, in which an apparently vegetative patient was able to answer ‘yes’ or ‘no’ to questions by imagining playing tennis or imagining navigating a familiar environment.

The details of the neural activation do not much matter for the inference from the data to the conclusion that the patient is conscious. The data exhibits consciousness, the authors of these papers believe, because it exhibits agency. Compliance with the instruction to perform a task requires agency, and agency requires consciousness (Bayne 2013).

This inference looks shaky in the light of two facts, however: the patient group has damage to much the same parts of the brain as patients suffering from akinetic mutism (AM), and AM patients are capable of instruction following and even answering questions. This makes it plausible to suggest that PVS patients in the experiments are complying with the experimenters’ requests in an analogous (or even identical) manner to the way in which AM patients engage in this behavior (for details, see Colin’s paper). This, in turn, makes it reasonable to doubt that these behaviors are markers of agency (I took issue with the claim that agency is a marker of consciousness in my recent book).

As Klein interprets the data from AM patients, they are not capable of endogenous intention formation; hence their lack of capacity for endogenous agency. Given the right prompts, however, AM patients may engage in complex activity – even something as demanding as reading a test and answering questions about it. If PVS patients are indeed relevantly similar to AM patients, then we may conclude that in the absence of special prompting they are not capable of agency. Since prompting is required for intention formation, and therefore for agency, we should doubt that they are conscious when they are not prompted.

There are some complications here, however. One might think that it is the capacity for agency, and not the manifestation of this capacity, that is evidence for consciousness, and that AM patients have this capacity: on this view, external prompting allows the patient to manifest a capacity, not to develop a capacity they otherwise lack. While this move is somewhat tempting, we think it ought to be resisted. Agency is best understood as requiring the ability to act in a way that is relatively independent of stimuli, and as requiring more flexibility of response than is exhibited by AM patients. We do not describe plants as agents, because their responses to stimuli are inflexible and fixed. For analogous reasons, there is room to doubt that AM patients give evidence of agency even when they respond to prompting. The inflexibility of response makes the attribution of agency to them suspect.

Klein himself does not take his alternative explanation of PVS patients’ deficits and abilities to cast doubt on the claim that they are conscious. Utilising a distinction from Kriegel, he suggests that they manifest peripheral consciousness without focal consciousness, where peripheral consciousness is consciousness with very little content. His evidence for this claim depends on the self-report of AM patients. Patients with a less severe form of AM report this curiously empty state; these introspective reports are taken by Klein to be extremely good evidence that they are conscious. Even if this is right (we worry that these reports may be elicited, in the same way as responses to questions, and that it may therefore be question-begging to take them as evidence of consciousness), the inference from consciousness in those who are less impaired to its presence in those who give no sign of it is surely a fraught one.

Klein also cites retrospective reports by patients who recover from AM. In some (but, importantly, not all) cases, they report consciousness of their surroundings and of events when they were symptomatic. Again, we think the inference from evidence of consciousness in a subset of patients who recover to its presence in all AM patients, and thence to PVS patients, is a fraught one. Even with regard to those patients who recover from AM, we may doubt whether their current testimony is good evidence of their past consciousness. These patients have access to information about their past experiences, and these experiences would be, in a normal subject, conscious, but it may be that they were not concurrently conscious in the patients. They are now recalled, let us suppose accurately, but recalling informational content that is normally conscious is not necessarily recalling consciousness. Further, it is difficult to know how to interpret these retrospective reports: what is reported seems as consistent with absence of consciousness as with absence of contents.

Suppose, however, that Klein is correct and AM patients are conscious, and that PVS patients are similar enough to AM patients that we may conclude that they too are conscious. Suppose, further, that they are conscious all (or much) of the time, and not just when they are prompted. Would they then enjoy the moral status that is rightly attributed to normal subjects in virtue of the fact that they are conscious? We suggest that the answer is no. As I mentioned, I think the bulk of the work in underwriting moral status is done not by the capacity for experience per se, but by the capacity to have mental states with the right kinds of contents, as well as the capacity to self-attribute these states. It is something like access consciousness (with appropriate contents) that is required for having an interest in a life, not phenomenal consciousness. Information must be sufficiently available for rational thought and deliberation in order for a being to be able to have future-oriented desires or to conceive of itself as persisting in time. If this thought is right, then attributing to PVS patients (and to AM patients) peripheral consciousness, a kind of consciousness with very little content, is not attributing to them a kind of consciousness that does the work of underwriting moral status of the kind and degree that is required for personhood or for being the subject of a life.

Insofar as PVS patients have some kind of consciousness, they would nevertheless have some degree of moral status that nonconscious beings lack. If an agent who possesses peripheral consciousness comes to have focal consciousness when prompted (as – I take it – Klein believes), it is reasonable to think that they come to have focal consciousness given other kinds of stimuli. That would suggest that they could experience pain and pleasure, and these states are plausibly of direct moral significance. But they would not have the really significant kind of moral status that is usually attributed to conscious beings, and which underwrites a serious interest in continuing to live.

Thanks, Neil, for this very interesting post.

We (here at Western) read Colin’s paper recently and found it very thought provoking. It’s raised several important questions for our work.

One issue I’m still puzzled by is the scope of Colin’s argument. The paper takes aim at the mental imagery technique first documented in Owen et al. 2006. But it seems like the argument may also apply more broadly to the neurobehavioral scales used in clinical neurology (from which Adrian’s technique borrows). For example, the inferences used in the mental imagery technique, namely that agency is a marker of consciousness, are the same inferences used in, among other scales, the Glasgow Coma Scale and the Coma Recovery Scale-Revised. So, by the same token, would a patient who satisfies a score for the minimally conscious state on the CRS-R (and, perhaps, has the right type of focal lesions) also be classified as A.M.?

I’m not sure what to think about that. My hunch is that something isn’t right… But, then again, maybe the inferences used in the scales aren’t right!

Looking forward to your work with Will Davies.

I will ask Colin what he thinks, Andrew. I don’t want to abandon agency as a marker of consciousness myself – I think that consciousness is required for a broad swathe of consuming systems to get access to a broad range of information. On my picture, what consciousness buys you is domain-generality, and in turn that underwrites flexibility of response. So genuinely and intelligently flexible agency is a marker of consciousness because consciousness is necessary for these things. I don’t mind whether you say that therefore agency is a marker of consciousness (restricting ‘agency’ to such flexible response) or instead use ‘agency’ to mean something less demanding and say that this kind of intelligent agency is a marker of consciousness. Either way, I think this gives us grounds for scepticism about the kinds of inconsistent and undemanding responses that are taken to be evidence for the minimally conscious state, at the very least. On my view, overlearned behaviour doesn’t require consciousness (think of automatism) but the kinds of responses required for the diagnosis of MCS may be overlearned.

Thanks! That’s really helpful.

Hi Neil,

As in every discussion of consciousness, there are many points here that deserve discussion. But I’d like to touch on one aspect of consciousness that rarely gets the attention it deserves: the formation of memory.

Normally, when we are conscious of something, our brains form an episodic memory trace of the event, which allows us to answer questions about it later. Normally we take the existence of memory as evidence for consciousness, and the lack of memory as evidence for absence of consciousness. As you indicate in your essay, the validity of those inferences is not obvious; but let me duck that issue by defining a distinct type of consciousness which I will call M-consciousness: the formation of a memory of an event.

M-consciousness is a useful concept because it is (a) clearly defined; (b) experimentally detectable, at least in principle; (c) morally significant. A traumatic memory is bad even if one is not “conscious” when it is formed; conversely, consciousness is not as important if it can’t be remembered.

As you say in your essay, there is some evidence that M-consciousness can occur in akinetic mutism, although it falls short of proof. There is also evidence that M-consciousness sometimes occurs in people who undergo surgery using general anesthesia. In my opinion, when thinking about people in vegetative states, the most important question is whether they can display M-consciousness. Thus far there is no compelling evidence that they can, as far as I can tell.

That’s interesting, Bill. It is tempting to think that one could replace an identity criterion for consciousness with a memory criterion. There’s some evidence in favour: e.g., somnambulists recall very little about the period during which they were sleepwalking (a few fragments, which might suggest that they had fleeting moments of consciousness). Of course failure to recall isn’t evidence that one was not conscious: there are amnestic agents like propofol. Similarly, if Prinz is right the contents of consciousness are richer than the contents of working memory (presumably WM is a gateway to longer term memory) but availability to WM requires consciousness.

Thanks, Neil. I fall into the group who think of “consciousness” as an umbrella term. Thus, “Is X conscious?” is rarely a productive question: it leads to people arguing at cross-purposes, each having a different definition in mind. “Which aspects of consciousness does X possess?” is usually a much more productive question — it elicits useful information without such a tendency to dissolve into sterile debates about the true meaning of consciousness.