It’s natural to think we need language to explain; after all, isn’t an explanation an answer to a why-question? It can be, but it can also be a resolution of a wondering-why state. A paradigmatic folk psychological explanation has these three features:

- FP explanations are constructed by individuals as a response to an affective tension, such as a state of curiosity, puzzlement, fear, disbelief, etc. about a person or behavior. This affective tension drives explanation-seeking behavior.

- FP explanations reduce cognitive dissonance and resolve the tension that drives the explanation-seeking behavior; generating an explanation promotes a feeling of satisfaction.

- FP explanations are believed by the explanation-seeker, and are not believed to be incoherent given the individual’s other beliefs, regardless of whether the belief is true or consistent with those beliefs. (Andrews 2012, 120-121)

Piaget thinks infants are trying to explain their world; Alison Gopnik does too. So how can we explain without language? The same way we think without language, whatever that is. Here’s a simple example. A puzzle box intrigues you, so you begin to examine it, seeking to find out how it works. When you get the secret, say, tapping on the top to break a magnetic connection, your curiosity is satisfied. Sure, you can put the explanation of how the box works into words, but that’s no reason to think you didn’t have an explanation—a belief about how the box works—until you formed the words.

Now let’s take a folk psychological example from Tad Zawidzki’s book Mindshaping, which I mentioned in the previous post. Tad and I both think that belief reasoning is implicated in our offering reasons for action more so than it is in predicting behavior. But Tad thinks that we need full-blown language to offer exculpations, that is, excusing or justifying explanations of behavior. He gives an example of an early human group, with a scout who returns to the group and indicates that there is a herd of animals to the north. The group goes north, but there are no animals there. The scout’s reputation is at stake, because, in effect, his promise is unmet. Tad says that to repair his reputation, the scout can offer a narrative in terms of his beliefs, something like, “it was dark, I went south, and believed that I was going north”. But it seems to me that the scout doesn’t need to exculpate in terms of his beliefs: if they ended up in the wrong place, he can say, “this is the wrong place.” Or, rather than saying “it was dark and I thought I was going north,” he could merely point south and say “north”. The same message is transmitted, and there is no less of a reason to forgive him in the second case, even without reference to his belief.

Not only do we not need to talk about beliefs to explain our errors, but we don’t need to talk at all! Suppose the early humans don’t yet have language, but have sent the scout out to look for prey. The scout comes back, and indicates the location of the prey by pointing and pantomiming big game. Maybe the scout even has a communicative device to indicate approximately how long a walk it is to the place where the game is (something like the bee dances). But when the hunting party arrives there, the game is not there. It’s the wrong place. The scout leads the party back to a landmark where he made a wrong turn, and indicates through gesture and vocalization that they need to go a different way here. He is showing the group that he got lost at the landmark.

I haven’t seen animals explaining their own behavior in this way, but many animals can recognize their errors (a topic for a future post). And many animals communicate; great apes are even known to pantomime (yet another topic!). So I think it is worth investigating whether animals seek to explain their behavior, or the behavior of others, in order to examine whether they mindread. While not all explanations of behaviors will be in terms of reasons, certainly some are.

The research on chimpanzee theory of mind has focused on prediction, and both Robert Lurz (in his book Mindreading Animals) and I have argued that the experiments purporting to demonstrate chimpanzee mindreading can be explained in other ways (several psychologists have also made this claim, including Daniel Povinelli and Jennifer Vonk). I need to stress, though, that alternative explanations alone don’t give us evidence that chimpanzees don’t mindread. Rather, to make the case stronger either way, one needs different kinds of evidence that, together with the predictive evidence, support a hypothesis about chimpanzee mindreading. If chimpanzees seek to explain another’s behavior, and that behavior is only comprehensible in terms of beliefs, then we will have another source of evidence supporting the hypothesis that chimpanzees do mindread. I develop this argument in the penultimate chapter of Do Apes Read Minds, and in my 2005 Mind and Language paper “Chimpanzee theory of mind: Looking in all the wrong places.”

What’s interesting to me about nonlinguistic explanations is not that they are some kind of strange representation, because they’re not—they’re just a kind of belief—but that they suggest the existence of a normative sensibility. It’s the drive to explain that is so interesting. Without some initial motivation to explain, there will be no explanation seeking or understanding. And for adults across species who already understand the normal actions of their peers, the motivation to explain will usually come from a strange or seemingly wrong behavior. So this means that the adult has an ability to discriminate between strange and normal, wrong and right. It means that there is at least a naïve sense of normativity that creates the affective tension that motivates one to seek explanations. And this means that another source of evidence for chimpanzee mindreading would come from evidence that they have naïve normativity. Naïve normativity will be the topic of my next post.

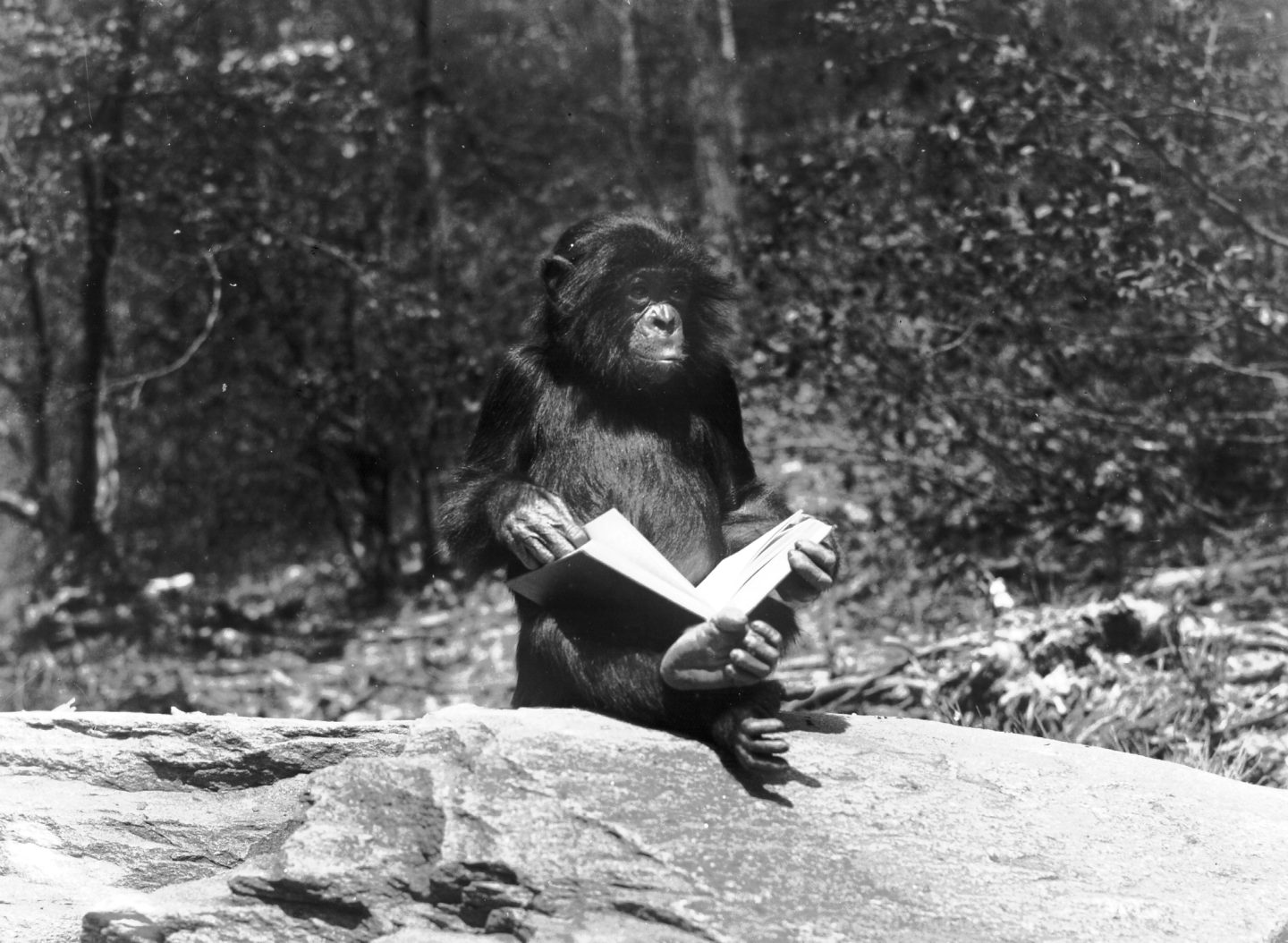

Note: The cover photo for this post is from the Yerkes archive. The story goes like this: Prince liked to watch students on Emory University campus, and he noticed that they prized these objects we call books. One day he got his hands on a book, and ran up to a rock to examine it. Prince leafed through the book with a puzzled expression on his face, trying to figure out what the students found so interesting. Thanks to Frans de Waal for the photo.

Kristin,

Thank you so much for your awesome posts here. But they are coming too quickly! I wish I had more time to respond.

One quick thought here because I can’t resist, and because I’d appreciate input from others-

I’ve always been sympathetic to your idea that explanation is a much neglected role for folk psychology, and that looking for explanatory rather than predictive uses of folk psychology could be a promising way forward when thinking about experiments that could distinguish FP hypotheses from non-FP alternatives in animals. But I’ve also still (maybe stubbornly) thought that this is all consistent with believing that a lot of the existing work looking for predictions is still on the right track, and even that the predictive and explanatory uses of folk psychology are tightly related.

Now, maybe this is just because I have predictive coding on the brain (sorry)–both because of Hohwy’s previous posts here, and because I’m organizing a reading group here tomorrow on the topic–but I wonder if redescribing all these phenomena in terms of predictive coding would show how these two sides of folk psychology are intimately related. Namely, the predictive coding wonks emphasize the bidirectional nature of the hierarchical cascade between incoming sensory inputs and predictive outputs generated by a top-down model of the environment. Crucially, the top-down model is always trying to predict the incoming sensory signals by building up an increasingly efficient causal model of the environment (each layer predicting the inputs from the previous layer), and error signals are only generated (and, correspondingly, learning occurs) when top-down predictions of sensory inputs fail to match the actual sensory input. Redescribing what’s going on in your three-stage process for FP explanations in these terms, then, one might say that predictions are presupposed by stage 1, because it’s a mismatch between predicted and observed psychologically-relevant cues that generates the tension that begins the search for explanations. For why else would they be surprised, curious, puzzled, disbelieving, etc., by what they observed? In this sense, while you might be right that prediction is not the *primary* function of FP, its predictive and explanatory functions necessarily go hand in hand. The remainder of the explanatory cycle would then be assimilated in terms of the organism’s iterative adjustment of the hierarchical model to account for the unexpected inputs, adjusting the priors so that the same outcome in the same circumstances would successfully be predicted in the future. (Resistance is futile.)

Do you have any thoughts on the right way to look at this? I could see the following worry: there’s an equivocation in what I just said about ‘prediction’, where the predictive coding theorist means something lower-level or computationally different (e.g. through different levels of explanation, or presuppositions about the architecture of the predicting mechanism, or involvement of attention or general-purpose cognitive resources…). Relatedly, if one buys the personal/subpersonal distinction, one could complain there’s a similar kind of equivocation when I say that the attempt to explain away the error signals in this case (by “changing the priors” to predict them) is what you mean when you say that an animal might be trying to “explain” the unexpected behavior.

Of course, when it comes to the “do any nonlinguistic animals have FP?” question, the issue is still whether the models they’ve generated represent psychological or non-psychological causes, whichever way we come down on this predictive coding question…

Just wanted to say that I love this cover image and the accompanying story! I’m visiting family in Atlanta right now and took my children today to the zoo, where the gorillas are just amazing. They are connected with the researchers at Emory, from what I understand.

Hi Kristin,

I like the general point that explanation can happen without language, but I also think it can happen without some of the other stuff you mention as well. Specifically, why think that FP explanations need to be prompted by an affective tension? Couldn’t it be that we just spontaneously and automatically interpret certain events according to our folk psychology, whether or not we’re confused/curious/etc? Perhaps we get curious when our automatic FP explanations yield anomalous results, and we have to put more conscious effort into resolving that anomaly. But that seems like a special case of the much more frequent, ordinary phenomenon of applying our FP theory accurately whenever we encounter other agents (or introspect about our own behavior).

Hi Cameron, thanks for your comment. Yes, absolutely the drive to explain paradigmatically arises when our predictions break down: I expected my mother would be at the restaurant but she wasn’t. Now I need to explain. And my explanation will lead me to make further predictions. That’s all fine. My beef is with the idea that FP predictions and explanations are symmetrical. I predicted that my mom would be at the restaurant because she said she’d be there, and I used the “people do what they say” heuristic. (That is, I didn’t predict she’d be there because I attributed to her a belief that we were meeting at the restaurant and a desire to meet me, the way standard accounts of folk psychology would have it.) But to explain why she didn’t show up I can’t just use that heuristic. My explanation need not be a belief attribution; I might wonder if the subway was delayed. But if we had a fight the last time we spoke, I might also look for folk psychological explanations, in terms of her moods or emotions (she’s mad at me) or her propositional attitudes (she wants to teach me a lesson).

I do suspect that there is a lot of cross-level stuff going on in our talk of prediction and explanation. We are great pattern-recognizing systems who predict subpersonally. We can also deliberately formulate predictions when engaged in explicit theoretical reasoning. But, in our quotidian interactions with other people, we probably are not automatically ascribing beliefs (see the automaticity of belief literature). And since FP explanations are deliberately generated, they would be at the personal level. I don’t think of rejigging our subpersonal predictive system in the face of failed predictions as offering explanations, just given the way I’m describing the phenomenon I’m interested in as causing affective tension and leading to seeking behaviors; that’s all on the personal level. If you’re suggesting that such rejigging is a kind of explanation, that’s an interesting idea. I’d want to know what we’d get out of calling the rejigging a belief (since explanation is just a kind of belief). Do you think there are benefits to seeing explanations at a subpersonal level (granting the personal-subpersonal distinction)?

I always need to repeat that not all explanations are in terms of propositional attitudes. So yes, an animal might explain behavior in other terms. That’s why I’m having such a hard time coming up with a possible experiment that would offer confirming evidence of chimpanzee mindreading. In conversation with Sara Shettleworth and Frans de Waal, we came up with a kind of test: Chimp A observes Chimp B appearing to be afraid of a banana, but there is also a barrier that blocks Chimp A’s view. If Chimp A approaches and looks behind the barrier, he sees a snake. So we might have the affective tension, the seeking behavior, and the resolution of the affective tension once an explanation was generated. But just as I think humans often explain in terms of the situation, Chimp A could be explaining Chimp B’s fear in terms of there being a snake there. (Though, in Bertram Malle’s studies on verbal FP explanations, he codes explanations that look to me like situational explanations as belief explanations with a suppressed attitude attribution; e.g. “he’s not eating the cake because it’s high calorie” gets counted as a PA, something like “he’s not eating the cake because he doesn’t want to eat the calories.”)

It would be great if someone could come up with a nonverbal explanatory scenario that is naturally explicable in terms of belief reasoning! Any takers?

Hi Evan, thanks for your comment! The reason why I think FP explanations are prompted by an affective tension (paradigmatically) is that they are, as I said in my reply to Cameron, at the personal level. And I certainly don’t think that FP explanations in terms of belief attribution are automatic. Even German and Cohen, who were touting the automaticity of belief attribution, have backed off that general claim. Following Apperly and Butterfill’s suggestion that there are two systems of belief tracking, they suggest that future research should examine the various parts of our belief tracking systems to determine the stimulus conditions and task constraints that give rise to different signatures. After the decomposition, perhaps we can examine which parts of these systems are automatic.

The automaticity of attributions of moods or emotions or automatic activation of stereotypes or social norms permits our amazing predictive abilities. But when they break down, we need the deliberative explaining of that failure—maybe in terms of beliefs, or, by revising an automatic attribution of mood, emotion, or maybe by recognizing that our stereotype or norm failed us.

Also, let me note that I say FP explanations paradigmatically have these three features because one can generate FP explanations in other ways. If you ask me why my mom came to lunch, I can answer the question without affective tension, without explanation-seeking, because in that case the explanation is ready to hand: because she told me she would meet me for lunch. But if you pressed me, and asked for a further explanation, asking “why did she want to meet you for lunch?” I might then wrinkle my nose and have to think a bit, generating an explanation that I didn’t have before, because I hadn’t asked myself that question.

In the book I argue that there are reasons to think our PA attributions are less accurate than our attributions of nonpropositional mental states such as emotions, or our predictions based on social norms or other generalizations. When our predictions come out wrong and we want to explain why, we are generating a hypothesis. Given that there are many belief-desire sets that are compatible with any one piece of behavior, the likelihood of our getting it right on the first go may be low. What we would really need to increase our chances of getting it right would be to generate and compare a number of different hypotheses. But we also know that humans suffer from a confirmation bias that leads us to accept the first hypothesis we generate. It’s the Three’s Company problem—Mr. Furley always generates a false hypothesis to explain Jack’s strange behavior, and he isn’t able to correct it unless someone in the know tells him what’s going on. I think our untested explanations for anomalous behavior often lead us astray, and that we’re all better off if we test our hypotheses, ideally by talking to the person whose behavior we’re explaining. That person might not know her reasons for action either, but she’ll probably have some background information that would be helpful, at the least!

Hey Kristin –

Very cool post. I don’t want to deny that nonlinguistic cognizers seek explanations. I hadn’t really thought about this issue, but you make a compelling case. But I still deny that they seek FP explanations, where these are understood in terms of the attribution of full-blown propositional attitudes, i.e., as philosophers tend to understand them: holistically linked to behavior/observational circumstance, intensional (same referents can be represented under different modes of presentation), and presupposing a behavioral appearance / mental reality distinction (the same, counterfactually robust behavioral pattern can be understood as the product of radically different mental states). There seems to be evidence that nonhumans are very bad at the appearance/reality distinction, even in physical cognition (I’m drawing on Povinelli’s recent book here). I’m not sure how you’d test for a mastery of intensionality without language. And the point about holism is really closely related to the appearance/reality distinction: any behavior is compatible with any attitude attribution, given sufficient compensatory adjustments to background attitudes. So why think nonlanguage users ever explain in these terms? I acknowledge that they may encounter situations that induce dissonance, but my guess is that it’s always dissonance about which of two perception-based stereotypes is applicable. Is this artifact manipulable in this way or that? Is this conspecific’s behavior part of this larger pattern or that? These are explanatory itches, but they’re not questions about an unobservable etiology, consisting of states with propositional content, it seems to me. Why would you need to explain in such terms in the wild? The advantage of making such full-blown FP explanations parasitic on language is that you already have public language sentences with intensionally individuated contents to which to relate agents.
I guess this is the insight I take from Sellars’s Myth of Jones – once there’s language, you can use it as a model of the unobservable etiology of intelligent behavior, and this automatically explains a lot of the puzzling aspects of PA attribution. Of course, I disagree with Sellars’s idea that Jones was a scientific genius. In my view, following Bruner, I think, he was more of an innovative advocate, coming up with justifications of apparently anomalous behavior to rescue status.

Hi Tad— Thanks for the opportunity to talk about all the cool things apes can do!

First though, I’m not nearly as convinced by the null results in experimental settings as you are when it comes to chimpanzee cognitive abilities. Null results mean that we haven’t been able to reject the hypothesis, not that we have confirmation of some absence. Maybe after a lot more research, and research in the field as well as in the lab, we’ll be justified in saying that chimpanzees don’t make appearance/reality distinctions, or don’t have intrinsic motivation to imitate, etc., but right now we’re not at that place. Brian Huss and I have a recent paper on this point; you can download it via my website.

Further, there is positive evidence that chimpanzees do make the appearance/reality distinction: Carla Krachun has some nice work on this, and a paper with Robert Lurz on whether chimpanzees use the distinction when predicting others’ behavior. Some chimps can tell that a magnifying lens makes a small grape look big, and they will choose the bigger grape even though it looks smaller (Krachun, Call, & Tomasello 2009). Or are you not convinced by these studies? Butterfill and Apperly also have some nice suggestions for how to test appearance/reality nonverbally, in their Mind and Language paper.

For some background on Povinelli’s book Folk Physics for Apes, you can look at Colin Allen’s book review, and the even harsher book review by Marc Hauser. (I think that’s the book you are referring to.) Chimpanzees probably do have much greater causal reasoning abilities than what Povinelli suggests in that book. For example, the research that finds less overimitation in chimpanzees than in human children can be explained in terms of chimpanzees recognizing causal relations. But that they will overimitate in opaque contexts also suggests that they may recognize that there are hidden causes. See a video of chimpanzee imitation compared with children’s. The great apes are used to hidden things, too—food processing is all about getting at the hidden goodies inside (coconut meat, palm hearts, ginger shoots), so there is no reason to think that invisibility offers a particular problem. Chimpanzees apparently can infer that food is hiding under a slanted board and not a flat one (hidden cause), and that other chimpanzees will also make that inference (Schmelz, Call and Tomasello 2011).

There are two hypotheses that I know of about why other great apes would need to think about appearance vs. reality in their natural conditions. One is Robert Lurz’s theory that the forest is full of illusions, and that apes who know that an object appears to look like something it is not will enjoy certain benefits. This is consistent with the Machiavellian version of the Social Intelligence Hypothesis, and is like the example I talked about earlier of the baboons who have sneaky sex and appear to know how to visually and auditorily hide themselves from the alpha. Monkeys are sensitive to the effects of visual and auditory cues on others (Santos et al. 2006; Flombaum and Santos 2005). And then there’s my hypothesis, which is a more cooperative version of the SIH, that knowing that things might not be as they seem is a means for developing new technologies. For example, it might look like you are destroying our monkey meat in the fire, but actually you are making it taste better. Technological innovations are not always transparent, pace Kim Sterelny, and a world where opaque behaviors offer real value is a world in which offering reason explanations for behavior would be valuable. Maybe that is a world some chimpanzees live in. When Crickette Sanz found that the chimpanzees of the Goualougo Triangle make tools that are used to make other tools, she gave us an example of how opaque the chimpanzee’s technological world might be. See her cool work here.

I guess I’d say there are appearance reality distinctions and then there are appearance reality distinctions. I’m quite willing to concede that nonhumans can appreciate that something can appear in a misleading way. But the kind of appearance/reality distinction relevant to PA attribution as most philosophers since Sellars have understood it strikes me as radically different. The idea is that there is a realm of unobservable states modeled on, but still very different from some observable domain. So, for Sellars, it’s the idea that there are mentalese sentences that have some but not all properties in common with public language sentences, just as molecules are hypothesized to have some but not all properties in common with little toy balls. In addition, any observable evidence is compatible with radically different configurations of these hypothesized unobservable states. The idea that there is this unobservable realm of exotic states and objects modeled on though still very different from some observable domain, that varies independently of any observable states, is the foundation of theorizing in science. And it’s what people like Sellars and Lewis took adult humans to be doing when attributing PAs. I can’t imagine what evidence could show an appreciation of this kind of appearance/reality distinction in nonhumans.

And there’s mindreading and there’s mindreading! I think it’s an ability on a continuum, and that even older kids don’t have all these sophisticated logical properties of PA attributions working well. It’s not just the opacity stuff I cited earlier, but also responsiveness to reasons stuff that I think Lurz discusses in his book. But kids do attribute PAs much earlier–at least they talk as if they do. So something has to give. You might reply that the kids aren’t really attributing PAs because they lack some of the richness of the logical properties. But I find that response really strange. (And I explain what’s wrong with that response in my papers challenging Davidson with the case of autistic speakers.) Or you might agree that there is development in one’s understanding of PAs–and that not everyone may be able to reach the pinnacle capacity. That’s the position I favor. So I’m taking Morgan’s Challenge to heart and trying not to compare the highest level of human cognitive operations with animals, but instead looking at less demanding human capacities that are still intelligent (and super interesting!)

Two points.

i) Admittedly, figuring out how to interpret personal-level claims in the language of predictive coding is the aspect of the view most in need of philosophical development; I wish one of the folks specializing in it would comment here, since I’m something of a newcomer. But another way to look at it might be as a challenge to our standard distinctions about explicit vs. implicit psychological capacities. If what’s needed for an FP explanation on your view is “affective tension and leading to seeking behaviors”, the predictive coding theorist will claim to deliver all you could hope for, noting that prediction failure will generate affective tension and that seeking behaviors, even low-level attentional mechanisms in perception, will be driven by the attempt to reduce prediction error as a result. And “attention” will characteristically be drawn to the unexpected stimulus, understood in terms of upping the gain in the perceptual error signal for the surprising inputs.

Does that make the “surprisal” conscious? Does this count as personal-level surprise? Or do we require something further?

Of course, an agent could be pushed into a surprisal state by the failure of a top-down model which was not driven by representations for psychological states and then “explain” the surprisal in terms of psychological states. But this raises some obvious questions–1) if the agent had the representational resources to offer FP explanations, why wouldn’t it also be routinely using those representations to generate top-down predictions, and 2) what signals that a psychological explanation is the most plausible candidate to explore when the failed prediction was not generated by a psychological hypothesis?

ii) Intersecting with Tad’s comments also, though, I’ve argued that there’s a lot of diversity in the literature in terms of how much sophistication a theorist presumes for something to count as “genuine” FP. While a lot of philosophers do indeed presume whole hog FP of the sort Tad specifies, there’s also a venerable tradition in philosophy and psychology of implicit FP/mindreading, treated as something akin to tacit/folk physics (e.g. Stich & Nichols 1992). And it is typically this sort of implicit FP capacity that proponents of nonhuman animal FP think animals possess. (While skeptics usually presume something more akin to the uber-intellectualized model Tad sketched.)

While we could treat FP as akin to explicit scientific theorizing, that would make it vanishingly rare in even human cognitive lives. If it ever seemed plausible that routine adult FP is of this fully explicit theoretical reasoning sort, it was due to something like James’ psychologist’s fallacy–presuming that the folk think about others’ psychological states the way that psychologists and philosophers of psychology think about them at the office. Rather, everyday human FP is much less demanding–a point Penn & Povinelli have more or less conceded. Even when we’re reasoning about physical causes, we very rarely explicitly reason in terms of unobservable causes–rather it’s the sort of activity that goes on when one sees a violation of expectations in tacit physics experiments, or like Kristin’s example of playing with the puzzle box.

Of course, by now there are a variety of different models for FP with testably different architectures, and one can be interested in whichever candidate one finds most interesting–the important point is that we keep straight who is advocating for which model in their claims. But if we were interested in the evolution of human social cognition, and on the kinds of pressures that would favor certain sorts rather than others, we’d do well to focus on a sort of faculty actually deployed in the vast majority of human social interactions in which we flexibly and appropriately react to the psychological states of others.

Cameron,

A couple of things on your first point. My interest in explanation is in the quasi-scientific aspect of generating them. So here I’m following the Gopnik line to a certain extent. In my discussion of explanation-seeking, the behavior is all very deliberate, including

• Touching objects

• Manipulating objects

• Observing attentively

• Visual awareness of the environment

• Detailed observation

• Aural awareness of the environment

• Listening attentively

• Asking questions

• Searching for answers

• Using different methods to search for answers (Chak 2007)

So I think that the old TT and ST debate’s focus on the subpersonal is also problematic. We developed the concepts of belief and desire deliberately in order to explain, justify, and make sense of behavior in order to normalize it. And at least some of what’s going on in the animal literature assumes mindreading is on the personal level, right, like when Povinelli says that apes don’t solve problems by considering invisibility, so they wouldn’t solve social problems by thinking of invisible mental causes (yes, the skeptics may be more aligned with this idea than the optimists).

My focus on explanation is two-fold: it’s part of the origin story, and it’s also part of a methodological suggestion. I don’t think that anyone out there has great facility explaining in terms of mental states without also being able to predict in terms of them. If the agent were able to explain, then the agent should be able to predict, too. If we have evidence in favor of mindreading that only comes from the predictive realm, we have weaker evidence than if we have evidence from prediction and explanation. So I’m denying the assumption behind your last two questions of (i), and trying to reemphasize that the focus on explanation seeking in apes is a methodological suggestion to deal with the epistemic question. But the first part of question 2 (“what signals that a psychological explanation is the most plausible candidate”?) is a live question for me. If A is seeking an explanation for an opaque behavior, and then imitates both that behavior and the appropriate next behavior (that he never saw demonstrated), that might suggest that A understands the target’s goal, and that’s something!

On (ii), I agree that there is a lot of miscommunication about what one is actually after in the mindreading literature. I like making mindreading (not FP) akin to explicit scientific reasoning because it offers a different way of meeting our social demands. The focus on cognitive architecture is too speculative for me. We humans do explicitly think about the causes of others’ behaviors in terms of their desires or beliefs, but not in our quotidian interactions with others. And again, the confirmation bias suggests that we wouldn’t be very good at predicting if we were regularly relying on an implicit hypothesis generation method. But we are good at our quotidian predictions, so we need another explanation. The Pluralistic Folk Psychology approach directs us to study not a single model that is supposed to describe all social interactions, but a plethora of faculties that humans use on a regular basis to handle the demands of a complex social world. Among these faculties is some belief reasoning faculty/faculties, one of which clearly develops over childhood (and there are probably great individual differences in how good one is at using these concepts), but that’s alongside the faculties that generate models of individuals in terms of their personality traits, past experience, social roles, relationships, and embedded in the society that they are in. My suggestion is that the creation of these models, and the manipulation of these models, better explains our quotidian social interactions with individuals we know. I also like Heidi Maibom’s idea that social expectations can account for a lot of our more general expectations of people: that students will show up to class, that the taxi driver will take you where you want to go, etc.

Kristin,

Thanks for the fantastic and thorough response! This has been a helpful discussion for me. The only point I might still push on is that I think some of the developmental literature has also been overinterpreted, and I still think whatever children, animals, and quotidian adults are regularly doing falls short of explicit scientific theorizing about unobservable causes (which I think largely comes for free once language has rewired a brain implementing the tacit system I suppose available to nonlinguistic agents). We both want to understand the nature of a nonlinguistic prediction/explanation system, and my preference is to gradually add components to low-level representational systems until they can display the needed behavior–probably due to my AI background, I trust models, even if speculative, better than other sources of evidence for what’s going on when we deploy FP. I think we could build a system that satisfies most of the features on your list without counting as explicit scientific reasoning. For example, when faced with a particularly tricky puzzle, I’ve deployed a “guided futzing” strategy that meets most of those points, but when asked to verbalize the strategy I used to solve the puzzle, or the strategies I attempted, I would be at a loss for words–in the same way a subject making a prediction in a tacit physics experiment might struggle to articulate the (e.g.) impetus principles that led to the false prediction. In other words, I think we can form and deploy robust causal models of our environment, implementing intelligent search strategies, searching out evidence that is informationally valuable given prior expectations, and intelligently revising those expectations as a result of the evidence observed, without any of those principles ever being explicitly formulated or reflected upon. I believe this because I think there is good empirical evidence that our subpersonal systems are a lot smarter than many have thought they are.
However, if this counts as a deliberate or personal-level FP strategy on your view, then perhaps we’re just talking about the same kind of system from two different directions. (I agree completely on your methodological point and re: pluralism.)

I’ll need to think a lot more about the point regarding confirmation bias, which is a very interesting concern. My initial sense would be that quotidian FPs work in the same way heuristics do: put crudely, they do well enough on tasks with the same structure as commonly encountered problems, but tend to fail (exhibit bias) elsewhere. The kinds of systems I’ve been discussing that implicitly induce a causal model of the environment are good at context-sensitivity, parallel satisfaction of many different constraints, and nonmonotonic inference (a notably disjoint set of virtues from what I think of as the explicit, serial, scientific theorizing system). Something like confirmation bias could also manifest in such an implicit system (because in Bayesian terms, the system’s expectations would always be colored by priors, so there would certainly be a major effect of inertia and it would be hard to revise expectations in which we are very confident), but it would probably manifest in a different form than it does with explicit serial theorizing, where the agent has an (often public) epistemic and emotional dedication to some stated hypothesis. In short, we might expect a lot more context-sensitive, holistic flexibility to ongoing evidence, and a lot more willingness to revise the “hypothesis” on the fly (though it might still be really hard to “see” disconfirming evidence).

This is admittedly all very highly speculative when applied to any particular case, but inspired by lots of work on the architectures that various linguistic and nonlinguistic systems would exhibit.

Cameron, thanks for your posts! I am sympathetic to a lot of what you say, and I’m glad that you have the background to do (or at least think about) the modeling. It’s just not an area I have any expertise in, so I feel that *I* would be speculating if my focus were to be on issues of architecture. I like your thought to start with a very simple model and push it to its limits before adding a new piece and seeing how far that gets you. That method can also help us see when we need to add an entirely new model.

I also agree that the developmental literature falls prey to overinterpretation; that’s a particular peeve of mine. Sometimes it seems that the kids are smarter than some other species because the interpretive rules are changed from species to species.

On the use of b/d reasoning, I am going to be stubborn. I think there are other ways for us to make the predictions we do. Yes, they are simple heuristics that break down. But we need those in order to form the b/d set that we’d be attributing to make the prediction. I’m just not sure how the postulation of PAs offers any additional predictive power… the old worry of the work done (or not done) by intervening variables. Of course, in rich mindreading situations I’m going to be at a disadvantage if I don’t read your mind back. But in a world where there was no mindreading, what predictive power does it initially get me that the other heuristics don’t already provide? It was working through that question that initially led me away from prediction toward explanation as the place where mindreading first makes a difference.

Sorry I haven’t been participating. Forgot to check the notify boxes. I have a lot of sympathy with Kristin’s point that kids probably don’t think of mental states in the way that Sellars and Lewis did either. So I guess my general point is to argue for a disjunction: either deny that nonhumans, and human infants and kids, and human adults in their unreflective quotidian social cognition, attribute PAs, or give me a different account of what it is to attribute a PA.

I guess I prefer to reserve the term “PA attribution” for the full-blown practice, and then call the stuff we and nonhumans do most of the time “adopting the intentional stance”. It’s just not clear to me what kind of behavioral evidence from interpretive tasks, or bouts of real world interpretation could show that interpreters are thinking about an unobservable realm of internal causes of behavior with the kinds of properties that PAs are thought standardly to have. I cheerfully grant that they are often looking for explanations and justifications, rather than predictions. And I grant that they have science-like “aha!” moments. But all of this can be explained in terms of observable, though sometimes highly abstract, behavioral patterns, it seems to me. And that’s just not what we mean by PAs when we think about it reflectively.

“It’s just not clear to me what kind of behavioral evidence from interpretive tasks, or bouts of real world interpretation could show that interpreters are thinking about an unobservable realm of internal causes of behavior with the kinds of properties that PAs are thought standardly to have… But all of this can be explained in terms of observable, though sometimes highly abstract, behavioral patterns, it seems to me.”

Hey Tad,

In defense of us folks who think that, say, preverbal infants are representing agentic behavior using something an awful lot like PAs: these explanations in terms of observable patterns that you mention are usually pretty post-hoc and under-predictive. Sure, you can always come up with some kind of associative or behavioristic explanation of infants’ looking time behavior in FB tasks, but as far as I can tell, every individual proposal of that nature has been refuted by further experimental controls. More robustly mentalistic accounts, in contrast, tend to do a pretty good job at predicting what infants will actually do. So while you may be right that there’s no one behavioral finding that will definitively show that infants are attributing PAs, that explanation still seems to do better than the alternatives when it comes to basic empirical and predictive adequacy.

The middle ground between the two might be something like Butterfill and Apperly’s minimal mindreading account, which falls short of “full blown” PA-attribution, as you say. I’m pretty sympathetic to the view that the structure of the representations that preverbal infants apply to agentic behavior might be very different from the logical structure of propositional attitude attribution in natural languages like English (e.g. embedded propositional structures). But even if infants are representing something like a registration relation or adopting a teleological stance, doesn’t this still count as positing unobservable, mental causes of behavior? I don’t think that “full blown” PA attribution needs to be the gold standard for having a theory of mind/folk psychology – why privilege a particular type of linguistic construction as being the most important part of having a theory of mind?

Hey Evan. I’m a big fan of Butterfill and Apperly’s proposals. I think the kinds of critiques of behavioral surrogates to which you refer are unfair, and presuppose a false alternative: infants either track patterns specifiable in terms of concrete properties of behaviors that we, as adult scientists, notice (e.g., the arc of an arm motion), or they attribute internal, unobservable, causally potent states. As Kim Sterelny and others have pointed out, you can attribute abstract, relational properties without considering them to be internal, unobservable causes. For example, teleological reasoning involves the attribution of goals, i.e., relations to future observable states of affairs, without necessarily conceiving of these as internally represented and causally potent. All perception involves abstraction, i.e., grouping states, events, objects, into equivalence classes that don’t necessarily depend on their concrete properties. Even categories like “red” are abstract in this sense – if we look at all the things people call “red”, they’re a pretty heterogeneous bunch at the concrete physical level. But no one’s tempted to claim that classifying something as red involves speculation about unobservable causes. Similarly, in my view, for categories like “goal”, “efficient means”, and “information access”. These are abstract yet perceptible properties of behaviors that are used to form equivalence classes that look pretty heterogeneous if we just look at concrete physical properties of their members, like the arc of an arm motion. But they don’t necessarily involve speculating about unobservable, internal causes. I think if we conceive of infant social cognition in terms of the application of such abstract yet perceptible categories, we can account for the data in a non-post-hoc way, without committing to the view that infants mentalize in the full-blown sense of attributing hidden, internal, unobservable, representational causes.

Hey Tad,

I agree, that would be a set of false alternatives, but I don’t think the inference to the best explanation argument I proposed is undermined even when we introduce the possibility that Baillargeon et al.’s infants are really representing abstract, relational properties. I just think that such explanations haven’t really had very much success when compared with the mentalistic alternatives. Maybe they will at some point, but the state of the art research seems to favor a more mentalistic approach.

I’m curious about how you (and Kristin!) would explain the following case:

Senju et al. 2011, “Do 18-month-olds really attribute mental states to others?”

(https://www.ncbi.nlm.nih.gov/pmc/articles/PMC3799747/).

Here, infants in the trick blindfold and blindfold conditions saw the *exact same* video, but only infants who experienced the opaque blindfold looked towards the last known location of the toy. If there are literally no observable differences between what these two groups of infants saw, but their expectations about the experimenter’s behavior were nonetheless modulated by their previous experiences, doesn’t it stand to reason that they are making some inference from their own experience to the experience of the experimenter?

My question is, what is the non-mentalistic, abstract, relational story here? How is this anything but an inference about an unobservable state internal to an agent? And if there is a relational story to be told, why should we prefer it over the alternative?

Hi Evan. Thanks for the nice case!

It seems to me that infants need only a flexible notion of information access here, not a notion of internal, unobservable, representational states with causal powers. They learn from their own experience that one blindfold permits and the other does not permit access to some kinds of information. They then predict that those with different blindfolds will behave in different ways, depending on the kind of information access they have. I think there is far more to our concept of belief than mere information access.

Of course, you might complain that I’m offering interpretations which become unfalsifiable. In a sense that’s true. I think empirical evidence alone underdetermines which of the interpretations is right. But there are broader theoretical reasons for preferring my sparse to your rich interpretation. For one, on my view of what PAs really are, anyone who has mastered PA concepts must appreciate that the exact same, counterfactually robust pattern of behavior might be caused by completely different sets of PAs. Not sure how you’d test for that in infants. Also, on my view, it is not a mystery why 18 month olds pass the kind of experiment to which you refer, but then can’t pass elicited response FB tasks until 4. Recent evidence confutes the idea that kids’ executive functioning deficits explain this. This is because simplified “Duplo” versions of the FB location task show that there is no true belief bias to be inhibited (see work by Paula Rubio-Fernandez and Bart Geurts). Also, there’s lots of recent evidence that most successes on spontaneous response FB tasks do not predict later success on elicited response FB tasks (the only exception is for location versions of this task). Finally, as I mentioned on the other thread, there’s evidence from recent cross-cultural work on FB tasks (in Samoa) that the time at which children pass the elicited response task varies massively, depending on cultural factors (https://jbd.sagepub.com/content/37/1/21). These sorts of data support dual (or multiple) systems accounts, rather than the unitary account that I think is presupposed by rich interpretations of the infant data.

And I will add that even if there is experience projection going on in the infant case, this could be perceptual rather than propositional, which is what Tad is looking for. Of course there are also nonmentalistic explanations possible here too, like what I offered in my 2005 M&L paper–the opaque blindfold is the “can’t do things with it” blindfold, and just like you shouldn’t beg from someone who can’t do things, you shouldn’t follow someone who can’t do things to find something interesting. And I agree that we’re looking for an overhypothesis to explain the body of data, but I also want to have evidence that comes from another direction. Prediction–moved objects, unexpected contents, and goggles/buckets–has been a mainstay for a generation!

Hi Tad,

I’m less concerned about how to use the phrase “PA attribution” than I am with wondering what more you get with the rich set of capacities you want to associate with the phrase. As I recall, you think that infants are mindshapers at an early age, but not mindreaders (until about 4?). But you think no apes are mindshapers. I think that infants and apes do try to get others to match others’ behaviors, and that the heuristics get us a long way. So they would both be mindshapers.

As I recall you think that we get PA attribution from the introduction of verbal promises. But I’m not sure why the language matters to you, since nonverbal promises are also possible. The scout was nonverbally promising that he’d look for game. When he fails, a group that only applies the intentional stance can think about counterfactual behavior patterns, can’t they? And isn’t this all they need to get the same results as the PA attributor who asks “what do they really believe and desire?”

And, the unreliable chimpanzee groomer made a promise by engaging in one pattern of behavior (e.g. soliciting grooming) but violated expectations by never grooming in return. There is evidence of retaliation against such “promise breakers” among chimpanzees, without a word.

If promises don’t need to lead us to PA attribution, then why do we (occasionally) do it? I’m still trying to figure out what we–the folk–get that we couldn’t get in other ways. Or do these cases not count as promises?

Hey Kristin –

Great questions. First – I agree that nonhumans mindshape. It’s acknowledged all over Ch. 2. I just claim there are certain kinds of mindshaping that are exclusive to humans – overimitation, pedagogy, norm enforcement, and self-constitution in terms of narratives. A lot of what you write about (if it’s borne out in observations) will force me to change my mind on pedagogy and norm enforcement I think, but that’s ok by me! It’s just an empirical hypothesis. I do think it’s less likely that we find nonhumans overimitating, to the extent humans do at least, and certainly unlikely we’ll find them engaging in self-constitution via publicly available narratives.

As for the stuff on promising and PA attribution – I think you missed the point of my argument (probably my fault!). The point isn’t necessarily that you need language to make promises and excuse reneging. I assume that’s true, but am open to empirical disconfirmation. Rather, the kinds of promise reneging (and other norm violations) that call for justification specifically in terms of full-blown PA attribution will require language. My whole goal in that final chapter is to find something for full-blown PA attribution to do. If you’re right that there are nonlinguistic practices of promising and justification among nonhumans, then that’s another thing we don’t need full-blown PA for. But we do have it. So why might we need it? Well why might we want to justify apparently anomalous behavior in terms of states with exactly the properties of natural language sentences? Here’s my answer: once there’s a specifically discursive practice of commitment making (and consequently breaking), then the only way to rescue status is in terms of covert commitments to other discursive items, i.e., PA attributions.

This doesn’t imply that all promise keeping need be discursive or that all promise breaking requires PA attribution to justify. It just implies that insofar as we do use full-blown PA attribution to justify behavior, it presupposes a specifically discursive form of promise making, and other forms of commitment.

Whad’ya think?

Hey Tad, thanks for this. Sorry I’m so slow to reply…I thought sabbatical would be a good time to take a yoga teacher training course, and now I’m busier than during a normal term!

First, sorry too about my misattributions. When I read your book I kept a list of all the things you said that other animals don’t do, and I forgot to look at it before boldly stating an untruth! And, my students and I continued to brainstorm about why you stressed the uniqueness claims, since it was never clear that your argument needs them. That’s even more so if you accept that we can promise, and repair failed promises, nonverbally. (We might need a more complex communication system than any other animals have, but with pantomime it could be done.)

As I read you, we’re pretty much on the same page about what use PA attribution gets us. We both think that the origin story is going to be something about how PAs are used to explain our behavior in terms of invisible causes in order to repair our status in our community. And we both think it promotes community cohesion to have this tool. Is that right?

But the difference might be that I think it’s enough to explain our behaviors in terms of IS-beliefs rather than full-blown PA beliefs. Since the patterns of behavior associated with the IS-belief attribution are going to be part of larger patterns (given rationality and all that), we should get all the predictive power we need, no? And when we lack some predictive power, as soon as the event occurs we have another data point for our pattern, and can modify it accordingly. Or am I STILL missing your argument? Probably. 🙂

Sounds right to me. But, I ask, then why do we need full-blown PA attribution at all? Because some of our status repair has to do with failing to live up to specifically discursive norms, so we need to attribute hidden commitments to other discursive claims to repair status.

Also, I disagree that prior to language status repair needs to refer to invisible causes. I suppose if you’re right that nonhumans try to repair status, they might need to show that their apparently anomalous behavior fits one pattern rather than another. But a strong notion of invisible causes for me requires appreciating the possibility not just that we’re assimilating some behavior to the wrong pattern of appearances, but also the possibility that two indistinguishable patterns of appearances issue from radically different invisible etiologies. The reason language is necessary here, in my view, is that when you have language, there is always the possibility that the commitments you continue to discursively express are at odds with the behaviors in which you continue to engage. And then the question of which interpretation is correct arises – should we treat your explicit commitments as the best guide to interpreting your behavior, or your actual behaviors? Without a language for expressing commitments that may be at odds with actual behavioral dispositions, this question does not arise, in my view.

Tad, I think I get it! On your origin story the IS only fails once we have language, so there is no need for PA attribution until we have language? Is that the story? What I really want, though, is an example!

That’s almost right. It’s not that it fails though. It’s that it comes up against an alternative interpretive heuristic (taking people at their word) that sometimes yields conflicting conclusions. Examples are legion. Akrasia, absentmindedness, whenever you don’t act the way you say you will. I’m drawing on Dennett’s early anticipation of the system 1 / system 2 distinction (inspiring Frankish’s treatment in Mind and Supermind) in Brainstorms. I think the chapter is called “How to Change your Mind”. There Dennett draws a distinction between beliefs (what I would call beliefs*, since they’re what we attribute from the intentional stance, hence not real beliefs), and opinions (claims in language you express support for). He thinks cases of akratic action involve a conflict between these. You endorse the claim that smoking is bad, but your behavior is best rationalized by the assumption that you think smoking is good. Now I don’t want to say that either of these interpretive strategies necessarily gets at full-blown PAs. My point is just that once you have these conflicting interpretive strategies, the question of what someone *really* thinks starts to make sense.

Thanks Tad. I guess I’m not too motivated to follow you because I’m suspicious that there is an answer to what someone really thinks in these cases. I like Eric Schwitzgebel on this issue. And when I teach his in-between belief paper, my students are usually pretty sympathetic once they get it. Which means that indeterminism about belief might be consistent with folk psychology…

Yup. That’s the other way to go. I just decided to assume philosophical consensus was right on this one, but it may be that commonsense is more comfortable with indeterminacy than I and many others assume. I’ve thought this would be a nice issue to investigate using XPhi methods, but I’ve had trouble composing a vignette that might get at the issue. Maybe some variant of Dennett’s case of Sam the famous art critic, and his mediocre artist son?

Right, I thought we had talked about this! 🙂 I’m no survey writer, but one could give people the stories that Eric uses in his papers, and ask whether Target:

believes p

believes ~p

believes both p and ~p

believes neither p nor ~p

My students are often happy to say that the Target believes both! So much for consistency…

Perhaps we did! I don’t recall. That would be very cool! Which Schwitzgebel cases do you have in mind? Is it published somewhere?

In his 2001 paper “In between believing” Eric gives 3 cases:

-Fading memory case: ES used to know a person named Konstantin when he was 20, but now forgot his last name.

-Failure to think through: R says that the primes are all positive numbers divisible only by themselves and 1.

-Variability with place and mood: A thinks & feels that there is a God at some times, and doesn’t at others.

and there are many more examples in his 2002 paper “A phenomenal, dispositional account of belief”

Both papers are available on his website.

Thanks!